
AI agents are moving quickly from experiments to everyday tools. They plan, call other services, interact with data, and sometimes act without a person watching every step. That creates value, but also new exposure. This post outlines the potential of AI agents, why their use is growing, the main governance challenges, and how Microsoft Purview can help you manage data risk, lineage, and policy at scale.

The potential of AI agents

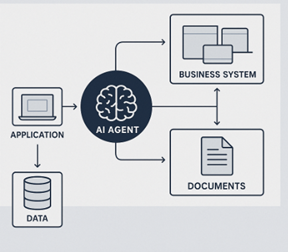

Agents extend beyond a single prompt and reply. They can read and write documents, call line-of-business systems, and collaborate with other agents. They help teams research, draft, summarize, and orchestrate tasks that used to require several people. As organizations adopt first-party copilots, custom agents, and third-party apps, these capabilities will spread across functions such as finance, operations, engineering, and customer service.

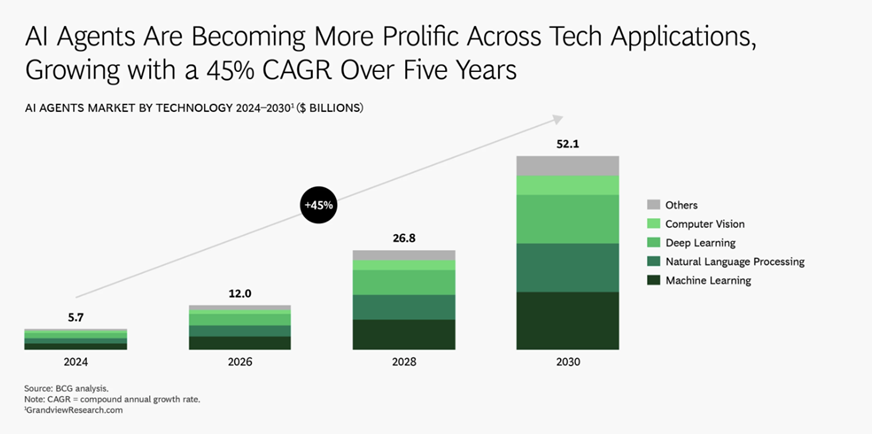

Expected growth in popularity

Adoption of AI agents is growing for several reasons. Many platforms now ship with agent features out of the box, along with APIs and connectors to a wide range of agentic tools. Internal teams can also build their own agents faster than before using low-code or no-code solutions. As a result, enterprises will face a mix of company-built agents, Microsoft copilots, and consumer AI apps accessed through browsers and browser extensions. Without a plan, this mix is hard to inventory, monitor, and control.

Key challenges with AI agents

AI agents change the risk model in several ways. They may process sensitive data in prompts and responses. They can call other agents or tools that pass content further than intended. They may also share information on a large scale. These and other factors raise questions about data loss prevention, lineage, retention, governance procedures, and access management. How do you address these challenges without suffocating productivity?

Data governance and lineage

Governance is a broad term. In this context it means having visibility into how agents can be created, which agents exist, who uses them, what data they can access, and how they process and share data. For lineage, you need to see which files or sources an agent references and how sensitivity labels are respected and inherited, and you need to confirm that access management is effective.

How Microsoft Purview helps

Microsoft Purview extends its data security and compliance features to AI apps and agents. Organizations can discover and govern interactions for Microsoft 365 Copilot and other copilots, custom-built applications, and selected third-party tools. Data Security Posture Management (DSPM) for AI provides a central place to view AI usage, assess risk, and create policies that control prompts and responses. Purview honors sensitivity labels so agents recognize protected content and can inherit labels in their outputs. That keeps access aligned with label permissions even when content moves across systems.
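To make label inheritance concrete, here is a minimal Python sketch of the general idea: generated content takes the most restrictive label among the sources an agent read. This is an illustration only, not Purview's implementation or API. The label names, their ordering, the `inherited_label` function, and the default label are all assumptions made for the example.

```python
from enum import IntEnum


class Label(IntEnum):
    """Hypothetical sensitivity labels, ordered from least to most restrictive."""
    PUBLIC = 0
    GENERAL = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3


def inherited_label(source_labels, default=Label.GENERAL):
    """Return the most restrictive label among the sources an agent used.

    If the agent used no labeled sources, fall back to an assumed default.
    """
    labels = list(source_labels)
    return max(labels) if labels else default


# An answer built from a public page and a confidential file
# should itself be treated as confidential.
output_label = inherited_label([Label.PUBLIC, Label.CONFIDENTIAL])
print(output_label.name)
```

Choosing the maximum is a conservative design: an output can never be less protected than the most sensitive input that contributed to it.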

With Purview, you can bring AI interactions into the unified audit log, which supports investigations and eDiscovery. You can apply data loss prevention policies that evaluate prompts and responses in real time and block or redact sensitive information before it reaches a model or agent that should not have access to it. You can set retention periods for AI interactions, such as how long chats are kept, and include those interactions in communication compliance. You can also review usage outside Microsoft 365 when agents or apps run in the browser and interact with unmanaged services, which helps you respond to shadow AI.
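The idea of evaluating a prompt and redacting sensitive content before it reaches a model can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how Purview's data loss prevention works internally. The regular expressions, the `Decision` type, and the `evaluate_prompt` function are hypothetical, and real classifiers are far more robust than two patterns.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by spaces or hyphens.
    "CARD_NUMBER": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


@dataclass
class Decision:
    action: str    # "allow" or "redact"
    text: str      # the prompt that may be forwarded to the model
    matches: list  # names of the patterns that matched


def evaluate_prompt(prompt):
    """Redact anything matching a sensitive pattern and report what was found."""
    matches = []
    redacted = prompt
    for name, pattern in PATTERNS.items():
        redacted, count = pattern.subn(f"[{name} REDACTED]", redacted)
        if count:
            matches.append(name)
    return Decision("redact" if matches else "allow", redacted, matches)


decision = evaluate_prompt("Charge 4111 1111 1111 1111 and mail bob@example.com")
print(decision.action, "|", decision.text)
```

Returning the matched pattern names alongside the decision means the same result can feed an audit record, which mirrors the pairing of policy enforcement and logging described above.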

Working with custom agents and developer platforms

Enterprises often build agents with Copilot Studio, Azure AI Studio, or other integrations, such as those with OpenAI. Microsoft Purview integrations help developers and security teams align. In supported environments, Purview can recognize and honor labels on data that an app or agent accesses, inherit labels on generated content, and send governance signals into audit logs. This shortens the path to production because teams do not have to build custom logging and policy engines for every agent.

What to expect next

As AI agent adoption grows, inventory and policy scope will expand. You will need a single control plane that lists agents across first-party, third-party, and custom deployments. You will want baseline policies that apply to all agents, and targeted rules for specific use cases. You will likely want dashboards that show sensitive data trends in prompts and outputs, risky users, top agents by activity, and policy decisions over time.
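As a rough sketch of what such an inventory could look like in data terms, the Python below models agents by origin, layers targeted rules on top of a baseline, and ranks agents by activity. Everything here is hypothetical: the `Agent` type, the origin values, the policy names, and both helper functions are invented for illustration and do not correspond to any Purview object or API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    name: str
    origin: str  # assumed values: "first_party", "third_party", "custom"


# Baseline policies apply to every agent; targeted ones depend on origin.
BASELINE_POLICIES = ["audit_all_interactions", "block_highly_confidential"]
TARGETED_POLICIES = {
    "third_party": ["block_confidential_uploads"],
    "custom": ["require_owner_review"],
}


def policies_for(agent):
    """Combine the baseline with any rules targeted at the agent's origin."""
    return BASELINE_POLICIES + TARGETED_POLICIES.get(agent.origin, [])


def top_agents(activity_events, n=3):
    """Rank agents by number of logged interactions (one event per agent name)."""
    return Counter(activity_events).most_common(n)


hr_bot = Agent("hr-helper", "custom")
print(policies_for(hr_bot))
print(top_agents(["hr-helper", "sales-bot", "hr-helper"], n=1))
```

Keeping the baseline separate from the targeted rules makes it easy to guarantee that a newly discovered agent is covered by default, even before anyone has classified it.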

How Infotechtion can help

Infotechtion helps organizations design and implement AI agent governance. We map business goals and risk appetite to a practical control set, configure Microsoft Purview for AI scenarios, and align employees on a shared process for moving agents from the drawing board to operationalization. We also establish reporting for usage, risk, and lineage, and train teams on how to properly use AI agents. If you plan to scale AI agents, we can help you set a clear foundation and move forward with confidence. Reach out to us at contact@infotechtion.com to hear more about what we can offer!
